
Dev's Journal 1

Default Framework, Proxies for Testing, and Polygon Nightfall

I've been trying to write more, but the market is down and the devs need to do something. So, I've been doing that instead.

I keep feeling the pull though. Therefore, I'm going to start a weekly publication called Dev's Journal. To start with, I'm going to compile a list of interesting development problems, solutions, and new things I learn each week. The format could vary over time, but the goal is to keep working and write about it. This will differ from my other content in that the bar for what I’ll publish is lower, so I can get into a rhythm. Specifically, I won’t try to ensure I’ve covered a topic end to end or as deeply as in a dedicated post. It’s more of a way to share current thoughts while they’re still a work in progress.

For those who are finding this later or don’t know me: I’ve been a smart contract developer for almost a year, working primarily in Solidity. My main area of focus has been DeFi, but I plan to expand this over time. I’m also interested in L2 solutions, gaming and creative industry applications, and how blockchain expands to mainstream use cases. I’m currently working with OlympusDAO and learn with a group of other devs in Solidity Guild.

With that out of the way, here’s this week’s Dev's Journal:

Building with: Default Framework (a protocol design pattern)

At OlympusDAO, we've recently started using a variation of the Default Framework created by fully for the development of new systems, with the goal of eventually migrating the entire protocol.

The general idea is to separate shared protocol state from business logic, similar to how many web application design patterns are structured (e.g. Model-View-Controller). Additional goals are to abstract away hard-coded or manually set dependency addresses and to centralize access control for shared state updates.

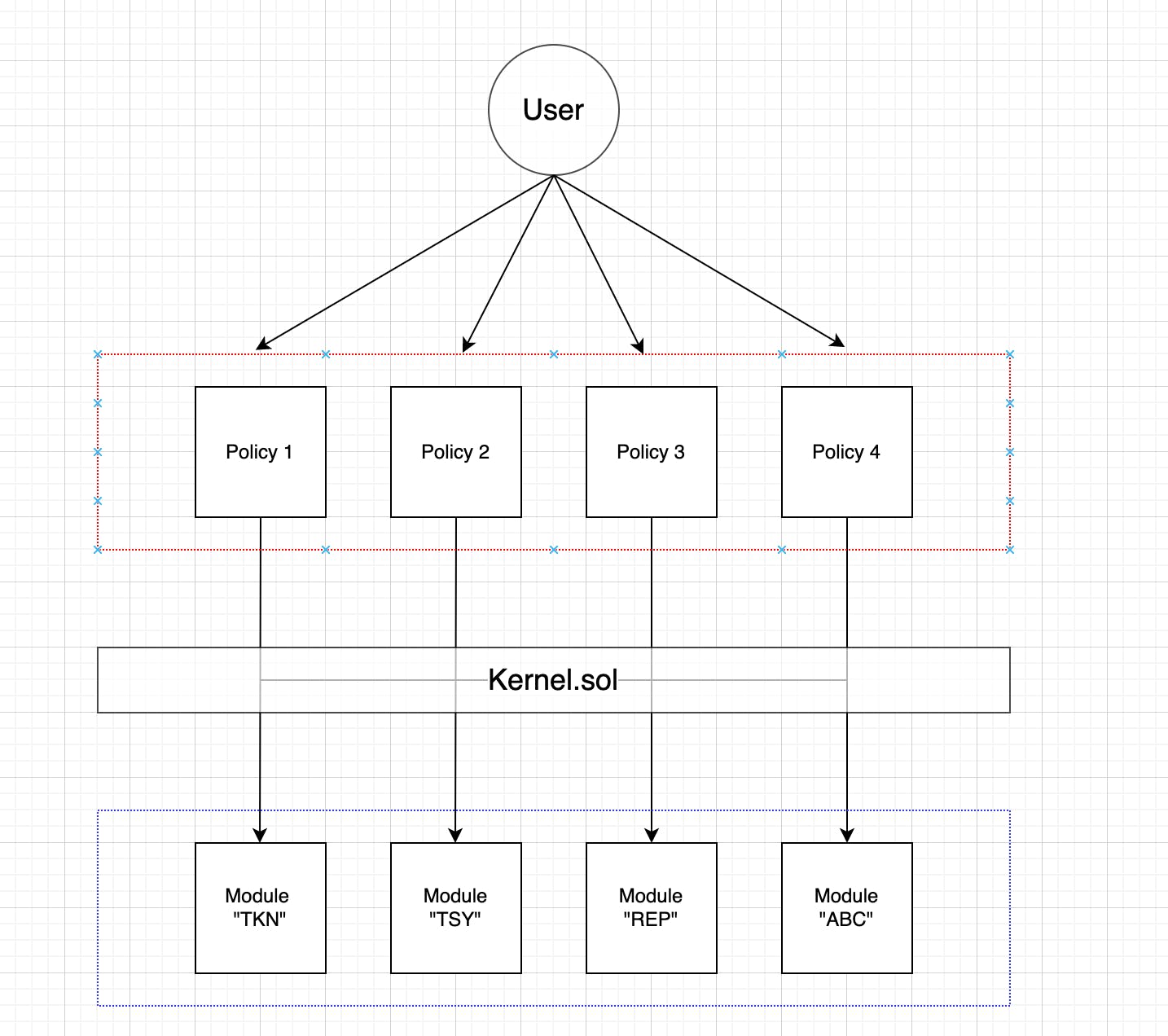

There are three types of contracts in the design pattern:

- Modules - store shared state and provide methods for updating it

- Policies - implement business logic and read/write to Modules

- Kernel - a singleton contract that registers Modules and Policies and configures read/write access for Policies to Modules

Modules are self-contained within the system (they can make external calls to other contracts, but the pattern limits this to contracts outside the boundary of your system). Each Module has a 5-character keycode that Policies use to reference it, without having to pass around contract addresses.

Policies look and act more like typical monolithic smart contracts. They have some configuration to request read and write access for the modules they require to function properly. Policies can be tiered if needed (have a Policy that calls other Policies as well as Modules), but this should be avoided if possible to preserve the benefits of the design. An example of an exception I think makes sense is consolidating Keeper functions on multiple Policies into one contract to pay out rewards from a single contract.
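To make the three roles concrete, here's a heavily stripped-down sketch. It is not the Default Framework source: `installModule`, `approvePolicy`, `canWrite`, and the `Points` and `Rewarder` contracts are names I made up for illustration, and the real Kernel does more than this. It only shows the shape of the idea: a Policy asks the Kernel for a Module by keycode, and a Module only accepts writes from Policies the Kernel has approved.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.13;

// Illustrative stand-in only, NOT the Default Framework source. installModule,
// approvePolicy, canWrite, Points and Rewarder are hypothetical names.

contract Kernel {
    address public immutable executor;
    mapping(bytes5 => address) public getModuleForKeycode;
    // keycode => policy => write access (all or nothing per Module)
    mapping(bytes5 => mapping(address => bool)) public canWrite;

    constructor() {
        executor = msg.sender;
    }

    function installModule(Module module_) external {
        require(msg.sender == executor, "Kernel: not executor");
        getModuleForKeycode[module_.KEYCODE()] = address(module_);
    }

    function approvePolicy(Policy policy_) external {
        require(msg.sender == executor, "Kernel: not executor");
        bytes5[] memory keycodes = policy_.requestWrites();
        for (uint256 i; i < keycodes.length; ++i) {
            canWrite[keycodes[i]][address(policy_)] = true;
        }
        policy_.configureReads();
    }
}

abstract contract Module {
    Kernel public immutable kernel;

    constructor(Kernel kernel_) {
        kernel = kernel_;
    }

    // Only Policies the Kernel has granted write access may call gated functions.
    modifier onlyPermitted() {
        require(kernel.canWrite(KEYCODE(), msg.sender), "Module: not permitted");
        _;
    }

    function KEYCODE() public pure virtual returns (bytes5);
}

abstract contract Policy {
    Kernel public immutable kernel;

    constructor(Kernel kernel_) {
        kernel = kernel_;
    }

    modifier onlyKernel() {
        require(msg.sender == address(kernel), "Policy: not kernel");
        _;
    }

    function configureReads() external virtual;

    function requestWrites() external view virtual returns (bytes5[] memory);
}

// A Module: shared state plus gated methods for updating it.
contract Points is Module {
    mapping(address => uint256) public balanceOf;

    constructor(Kernel kernel_) Module(kernel_) {}

    function KEYCODE() public pure override returns (bytes5) {
        return "POINT";
    }

    function credit(address to_, uint256 amount_) external onlyPermitted {
        balanceOf[to_] += amount_;
    }
}

// A Policy: business logic that looks its Module up by keycode, not by a hard-coded address.
contract Rewarder is Policy {
    Points internal points;

    constructor(Kernel kernel_) Policy(kernel_) {}

    function configureReads() external override onlyKernel {
        points = Points(kernel.getModuleForKeycode("POINT"));
    }

    function requestWrites() external view override onlyKernel returns (bytes5[] memory keycodes) {
        keycodes = new bytes5[](1);
        keycodes[0] = "POINT";
    }

    function reward(address user_) external {
        points.credit(user_, 1);
    }
}
```

In this sketch, calling `reward` before the Kernel's executor has run `installModule` and `approvePolicy` fails, and calling `credit` directly from any other address reverts with "Module: not permitted".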

A general architecture using the Default Framework may look like this (credit to fully for the image):

Structuring your system this way has several benefits...

- Provides a guide for developers to structure their data consistently across a protocol (especially important with multiple contributors to a codebase)

- Helps avoid spaghetti code by providing clear directions of data flow. This is in contrast to how many existing smart contract systems work where logic, state, and external calls are inconsistently coupled, creating many dependencies.

- As certain design patterns gain popularity, familiarity with them among the development community will allow others to more quickly understand a system and either build on top of it or contribute to the project.

and some drawbacks...

- Current implementations assume a consistent interface for Module contracts, which requires updating Policies that read from Modules if their interface changes. This would happen in any smart contract system, but it limits the benefits of having a service architecture.

- Write access for Policies on a Module is currently all or nothing. Granular write access will likely be preferred over time, with the downside of requiring extra configuration on a Policy.
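As a rough idea of what granular write access could look like, here's a hypothetical sketch that keys permissions on the function selector as well as the Policy. None of this is part of the framework as I've described it; `GranularPermissions`, `GranularModule`, and `Treasury` are invented names, and a single admin stands in for whatever would manage permissions in a real system.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.13;

// Hypothetical sketch of per-function write permissions. All names are invented.
contract GranularPermissions {
    address public immutable admin;
    // module keycode => policy => function selector => allowed
    mapping(bytes5 => mapping(address => mapping(bytes4 => bool))) public permitted;

    constructor() {
        admin = msg.sender;
    }

    function setPermission(bytes5 keycode_, address policy_, bytes4 selector_, bool allowed_) external {
        require(msg.sender == admin, "not admin");
        permitted[keycode_][policy_][selector_] = allowed_;
    }
}

abstract contract GranularModule {
    GranularPermissions public immutable registry;

    constructor(GranularPermissions registry_) {
        registry = registry_;
    }

    function KEYCODE() public pure virtual returns (bytes5);

    // msg.sig is the selector of the function being called, so each gated
    // function is checked against its own permission entry.
    modifier onlyPermitted() {
        require(registry.permitted(KEYCODE(), msg.sender, msg.sig), "not permitted");
        _;
    }
}

contract Treasury is GranularModule {
    uint256 public reserves;

    constructor(GranularPermissions registry_) GranularModule(registry_) {}

    function KEYCODE() public pure override returns (bytes5) {
        return "TRSRY";
    }

    function deposit(uint256 amount_) external onlyPermitted {
        reserves += amount_;
    }

    function withdraw(uint256 amount_) external onlyPermitted {
        reserves -= amount_;
    }
}
```

With this shape, a Policy could be granted `Treasury.deposit.selector` without also being able to call `withdraw`. The extra configuration shows up as one `setPermission` call per function instead of one per Module.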

We're going to be launching multiple new contracts using this framework over the next few months, and I'm excited to see how it allows more composability and extensibility for future work.

Created: General proxy for testing Modules

Motivation

In the Default Framework, functions gated on Modules with the onlyPermitted modifier can only be called by Policies with write permissions for that Module. Therefore, in order to test them atomically, you need to mock a Policy contract and give it those write permissions. This could be done for each Module independently and would require a bunch of tedious repetition, but I found a better way.

Solution

The general idea is to create a proxy for the Module contract that you need to test and make the proxy a Policy with the correct permissions. The proxy uses a generic fallback with the expanded fallback syntax (accepts parameters and return values), which also bubbles up custom errors thrown by the Module. In a sense, it's a complete passthrough. In the test file, the proxy (which is a Policy) is created and given the proper access control permissions through the Kernel. Then a reference to it is stored by casting the proxy's address to the Module's interface, so the reference has the correct interface to call the gated functions against.

Here's the code:

```solidity
/**
 * @notice Mock policy to allow testing gated module functions
 */
contract MockModuleWriter is Policy {
    Module internal module;

    constructor(Kernel kernel_, Module module_) Policy(kernel_) {
        module = module_;
    }

    /* ========== FRAMEWORK CONFIGURATION ========== */

    function configureReads() external override onlyKernel {}

    // Request write access for the single Module under test
    function requestWrites()
        external
        view
        override
        onlyKernel
        returns (bytes5[] memory permissions)
    {
        permissions = new bytes5[](1);
        permissions[0] = module.KEYCODE();
    }

    /* ========== DELEGATE TO MODULE ========== */

    fallback(bytes calldata input) external returns (bytes memory) {
        // Forward the raw calldata (selector + arguments) to the Module
        (bool success, bytes memory output) = address(module).call(input);
        if (!success) {
            if (output.length == 0) revert();
            // Bubble up the Module's revert data unchanged
            assembly {
                revert(add(32, output), mload(output))
            }
        }
        return output;
    }
}
```

Let's break down the fallback function since that's the most interesting component:

- The calldata (which includes the function selector and the arguments) gets passed to the stored Module address with a low-level call.
- The call returns a boolean of whether the call succeeded or not (`success`) and a bytes payload (`output`).
- The `output` can contain return values (if successful), an error message (if failed), or be empty (no return value or error message).
- In the case that the call fails (`success` is false), we want to revert from the proxy contract with the same error message as the call.
  - First, we check to see if the `output` is empty. If so, we simply revert.
  - If not, one way to get the error is to use some inline assembly (Yul) to load it from the `output`.
    - The Yul command `revert(p, s)` allows us to revert with the error once retrieved. The arguments are `p`, the pointer to where the error byte array (bytes) starts, and `s`, the size of the byte array.
    - The `output` byte array has the size of the array stored in its first 32 bytes. The Yul command `mload` reads the 32-byte word at a memory pointer, and `output` points at that size word, so we can use `mload(output)` to retrieve the size.
    - The error itself is stored after the size of the byte array, starting at byte 32. We can therefore get the pointer to the start of the error data by adding 32 to the byte array pointer (`add(32, output)`).
    - Putting it all together, you revert with the output error by calling `revert(add(32, output), mload(output))`.
- This code was taken from this Stack Exchange post and the OpenZeppelin Address.sol contract.
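To see the passthrough behave as described without pulling in the framework, here's a minimal self-contained sketch. `Vault`, `Passthrough`, and `PassthroughDemo` are hypothetical stand-ins I made up (no Kernel, Module, or permissions involved); only the fallback body mirrors the code above. It assumes a compiler of at least 0.8.13.

```solidity
// SPDX-License-Identifier: MIT
pragma solidity ^0.8.13;

// Hypothetical stand-ins for illustration; none of these are framework contracts.
contract Vault {
    error Vault_AmountTooLarge(uint256 requested, uint256 available);

    uint256 public balance = 100;

    function withdraw(uint256 amount_) external returns (uint256 remaining) {
        if (amount_ > balance) revert Vault_AmountTooLarge(amount_, balance);
        balance -= amount_;
        return balance;
    }
}

contract Passthrough {
    address internal immutable target;

    constructor(address target_) {
        target = target_;
    }

    fallback(bytes calldata input) external returns (bytes memory) {
        (bool success, bytes memory output) = target.call(input);
        if (!success) {
            if (output.length == 0) revert();
            assembly {
                // output points at the 32-byte length word; the revert data follows it
                revert(add(32, output), mload(output))
            }
        }
        // Returned as-is: the extended fallback does not ABI-encode its return value
        return output;
    }
}

contract PassthroughDemo {
    Vault public immutable vault;
    // The proxy's address cast to the Vault type, so calls use Vault's interface
    Vault public immutable viaProxy;

    constructor() {
        vault = new Vault();
        viaProxy = Vault(address(new Passthrough(address(vault))));
    }

    // The return value travels back through the proxy and decodes as a uint256
    function withdrawOk() external returns (uint256) {
        return viaProxy.withdraw(40);
    }

    // Reports whether the custom error arrived through the proxy with its selector intact
    function withdrawTooMuch() external returns (bool sameError) {
        try viaProxy.withdraw(1_000) returns (uint256) {
            revert("expected a revert");
        } catch (bytes memory reason) {
            sameError = bytes4(reason) == Vault.Vault_AmountTooLarge.selector;
        }
    }
}
```

The cast in the constructor is the same aliasing trick used in the test file: the caller sees a `Vault`, but every call lands in the proxy's fallback first. Note that from the target's point of view `msg.sender` is the proxy, which is exactly why giving the proxy write permissions is enough to reach gated functions.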

One weird quirk with this type of fallback function right now is that it is not properly formatted by the prettier-plugin-solidity library, which is popular among newer code bases. I ended up making an exception for this file in a .prettierignore file to avoid CI issues. I tracked the error to the solidity parser used by the library and created an issue to hopefully get it resolved soon (the team seems great and responsive so I'm sure it will).

Researching: Polygon Nightfall

I've spent most of my career working around large organizations and with enterprise software. Enterprises have different priorities than other customers when choosing software, a big one being data privacy (although many consumers care more about this now). The emphasis comes from either compliance with laws and regulations or competitive concerns.

Additionally, I have followed the development of Ethereum scaling solutions at a cursory level and am keen to understand how these efforts are progressing.

As such, the recent launch (and previous announcement) of Polygon Nightfall piqued my interest. The dev elevator pitch is: Nightfall is an optimistic L2 rollup that uses zero-knowledge proofs to provide privacy for rolled up transactions*. The asterisk is because the transactions are currently limited to token transfers and there are some technical challenges which could make the UX less than desirable in certain situations. Additionally, privacy is only enabled once the tokens are deposited into the rollup from Ethereum Mainnet and withdrawals can reveal some information about what you've received since the outbound data will not be shielded (an obvious consequence of Mainnet not being private).

The zero-knowledge proofs work by using a primitive called a Commitment. To simplify, a Commitment represents a bucket of sorts that has an owner and a balance of a token. A user can end up with multiple commitments for the same token and certain transfer amounts are limited by being able to only send tokens from up to 2 commitments at a time. You also cannot empty a commitment completely unless you transfer the whole thing to a new owner (which is the simplest version of a transfer).

The stated enterprise use cases for Nightfall are supply chain and procurement applications, for which Ernst & Young launched product offerings as an initial application on Nightfall. Additional use cases are NFT marketplaces and private token transfers (e.g. mixing or anonymizing). I am personally interested in the procurement applications, since procurement is a process that consumes a large amount of resources at public and private sector organizations.

Overall, I think Nightfall will improve over time and offer a compelling solution for enterprises that want to leverage the security of a public blockchain and not worry about operating a private one. I intend to give it a whirl on the testnet when I find some time and determine if applications can be built which abstract the transfer logic a bit, perhaps leveraging multiple sequential transactions to find optimal paths to transfer a certain amount.

Currently Reading

Fiction

- Snow Crash - Neal Stephenson. A bit of a cult-classic that I decided to dive into. It's been interesting so far to see the metaverse concepts imagined in the book (which was published in the early 1990s) be realized in different ways with blockchain and VR technology. Unfortunately, as with most stories involving a metaverse, there is a dystopian bent to it.

Non-fiction

- How to Take Smart Notes - Sönke Ahrens. I've read pieces of this over time, but I've been revisiting it recently with the goal of expanding my technical knowledge base through writing.

- Hyperstructures - jacob.eth. Interesting essay on the philosophy of building protocols as public goods infrastructure and the characteristics they should have.